Kubernetes Services

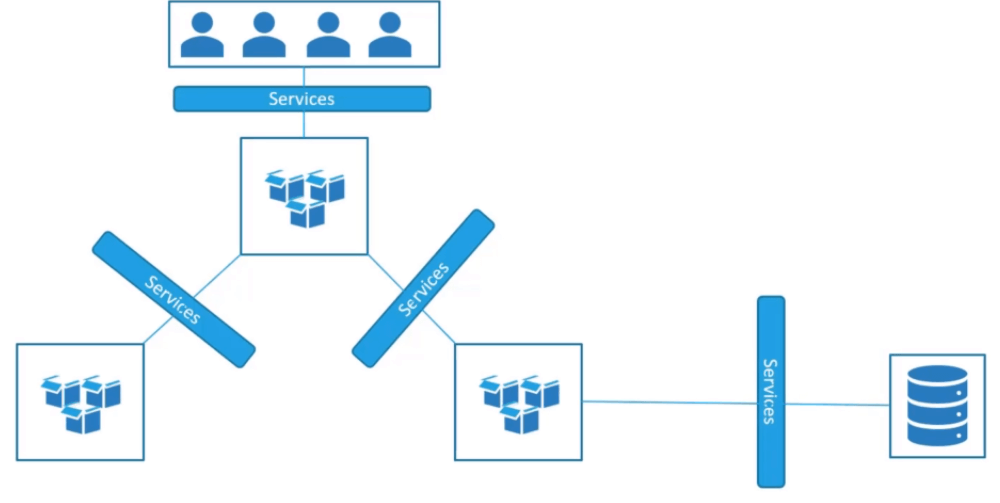

Kubernetes Services enable communication between various components within and outside of the application. Services help us connect applications with other applications or with users.

For example, your application may have groups of PODs running different parts of it, such as a group serving the front end to users, another group running back end processes, and a third group connecting to an external data store.

Services enable connectivity between these groups of PODs. They make the front end application available to end users, enable communication between front end and back end PODs, and help establish connectivity to external data stores.

Kubernetes Services enable loose coupling between the microservices in our application.

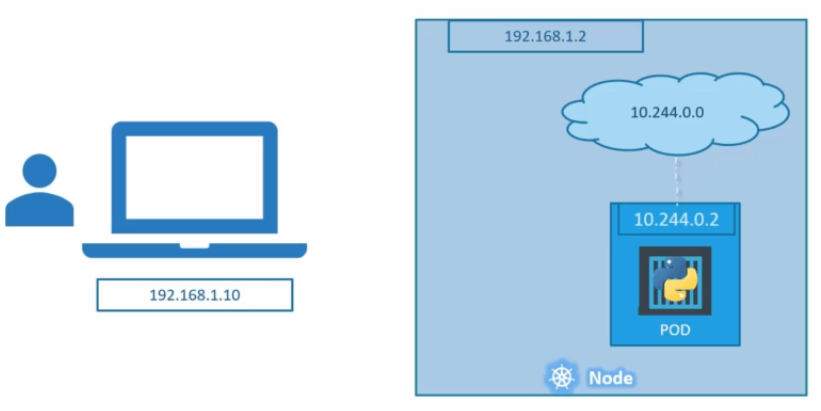

Now let's take a look at one use case of services: external communication. Say we deployed a POD running a web application. How does an external user access the web page?

First, let's look at the existing setup. The Kubernetes node has the IP address 192.168.1.2, and my laptop is on the same network with the IP address 192.168.1.10. The internal POD network range is 10.244.0.0, and the POD has the IP address 10.244.0.2.

Clearly, I can't access the POD IP address 10.244.0.2 from my laptop, as it is on a separate network. So what are the options for accessing the application web page?

One option is to SSH into the Kubernetes node at 192.168.1.2 and, from that node, access the web page using the POD IP address. But that only works from within the Kubernetes node, and it is not what we really want.

I want to be able to access the application web page from my laptop, without SSHing into the Kubernetes node, simply by using the node's IP address. For that we need something that forwards requests from the node to the POD. This is where Kubernetes Services come into play.

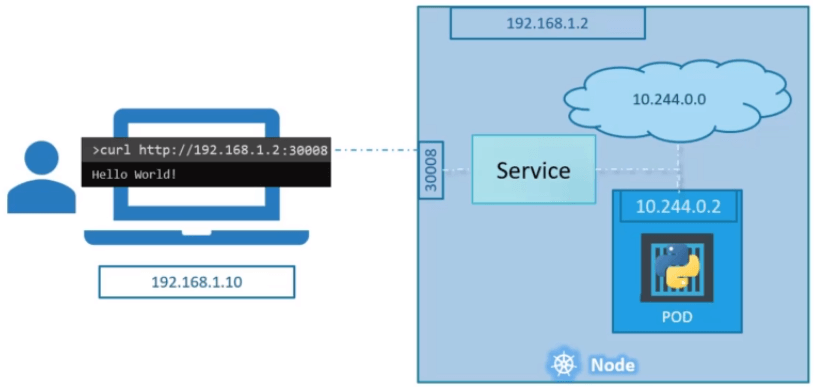

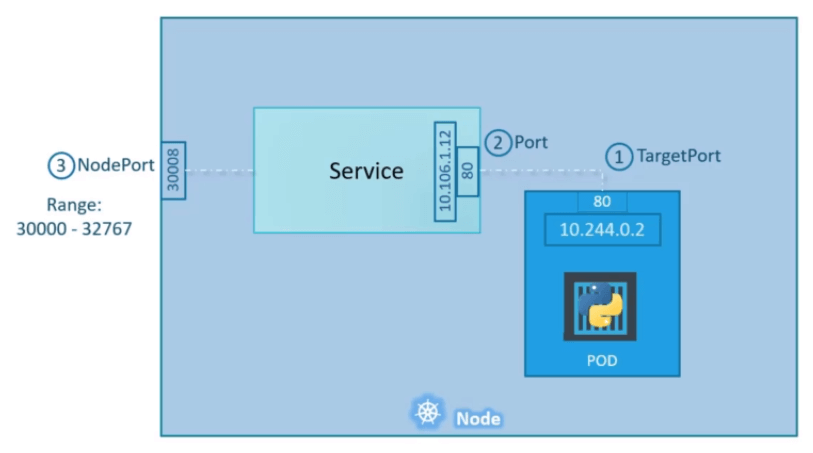

A Kubernetes Service is an object, just like PODs, ReplicaSets and Deployments. One of its use cases is to listen on a port on the node and forward requests on that port to a port on the POD running the web application. This type of service is known as a NodePort service, because the service listens on a port on the node and forwards requests to the POD.

There are other types of services as well, which we discuss next.

Kubernetes Service Types

1. NodePort Service

A NodePort service is the most primitive way to get external traffic directly to your service. NodePort, as the name implies, opens a specific port on all the Nodes (the VMs), and any traffic that is sent to this port is forwarded to the service.

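The YAML for a NodePort service looks like this:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: myapp-service
spec:
  type: NodePort            # expose the service on a port of every node
  selector:                 # forward traffic to PODs carrying these labels
    app: myapp
    type: front-end
  ports:
    - name: http
      port: 80              # port of the service itself (on its cluster IP)
      targetPort: 80        # port the web application listens on inside the POD
      nodePort: 30008       # port opened on each node (default range 30000-32767)
      protocol: TCP
```

Now you can access your application web page on port 30008 of the node, for example at http://192.168.1.2:30008 from the laptop in the setup described above.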
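A service only forwards traffic to PODs whose labels match every key/value pair in its selector. The sketch below shows a POD that myapp-service would pick up. It assumes the web application is an nginx container listening on port 80; the POD name, container name and image are illustrative assumptions, not part of the original setup.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: myapp-pod              # hypothetical POD name
  labels:
    app: myapp                 # must match the service selector
    type: front-end            # must match the service selector
spec:
  containers:
    - name: nginx-container    # hypothetical; any web server on port 80 would do
      image: nginx
      ports:
        - containerPort: 80    # the port targetPort in the service points at
```

If you run several PODs with these same labels, the service spreads requests across all of them.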

This method has several disadvantages:

- You can only have one service per port

- By default, you can only use ports 30000–32767

- If your node/VM IP address changes, you need to deal with that
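On the port range point: you do not have to choose the node port yourself. As a minimal sketch, the variant of myapp-service below leaves nodePort out, in which case Kubernetes allocates a free port from the node port range (30000–32767 unless the cluster administrator changed it with the kube-apiserver `--service-node-port-range` flag).

```yaml
apiVersion: v1
kind: Service
metadata:
  name: myapp-service
spec:
  type: NodePort
  selector:
    app: myapp
    type: front-end
  ports:
    - port: 80
      targetPort: 80
      # nodePort omitted: Kubernetes picks a free port from the node port range
```

`kubectl get service myapp-service` then lists the allocated port in the PORT(S) column, in the form `80:<nodePort>/TCP`.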

For these reasons, I don’t recommend using this method in production to directly expose your service. If you are running a service that doesn’t have to be always available, or you are very cost sensitive, this method will work for you. A good example of such an application is a demo app or something temporary.

2. ClusterIP Service

This service creates a virtual IP inside the cluster to enable communication between different services, such as a set of front end servers and a set of back end servers. ClusterIP is the default service type, and it is reachable only from within the cluster. A NodePort service builds on it: when you create a NodePort service, a cluster IP is allocated for it automatically and the node port routes to it, which is why a NodePort service can be reached from outside the cluster at NodeIP:nodePort.

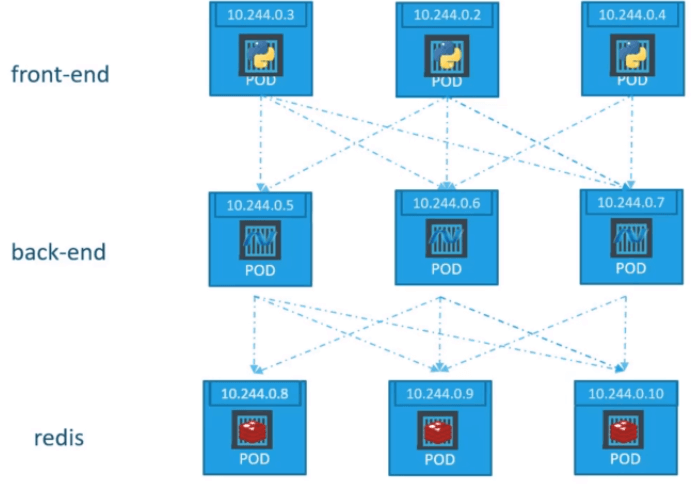

For example, a full stack web application has different kinds of PODs: a number of PODs running front end web servers, a number of PODs running back end servers, and another set of PODs running a key-value store like Redis.

The web front end servers need to communicate with the back end servers, and the back end servers need to communicate with the Redis servers. So what is the right way to establish connectivity between these services?

Each POD has an IP address assigned to it, as we can see in the picture above, but these IP addresses are not static. PODs can go down at any time and be replaced by new PODs with new IP addresses. So we can't rely on POD IP addresses for internal communication between these applications.

Also, if the front end POD at 10.244.0.3 needs to connect to the back end, which of the three back end PODs should it go to, and who makes that decision?

A Kubernetes service can group PODs together and provide a single interface to access the PODs in the group. For example, a service created for the back end PODs groups all back end PODs together and provides a single interface for other PODs to reach them.

Requests are forwarded to one of the PODs behind the service, chosen at random. Similarly, we create additional services, for example one for Redis, so that the back end PODs can access the Redis systems through a service.
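As a minimal sketch of such a Redis service, assuming the Redis PODs carry the labels `app: myapp` and `type: redis` and listen on Redis's default port 6379 (the service name `redis` and these labels are hypothetical):

```yaml
apiVersion: v1
kind: Service
metadata:
  name: redis               # hypothetical name; back end PODs connect to "redis"
spec:
  type: ClusterIP
  selector:
    app: myapp
    type: redis             # assumed label on the Redis PODs
  ports:
    - port: 6379            # port the service exposes (Redis default)
      targetPort: 6379      # port Redis listens on inside each POD
```

The back end PODs would then connect to `redis:6379` instead of to any individual Redis POD IP.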

This makes it easy and effective to deploy microservice-based applications on a Kubernetes cluster. Each layer can now scale or move as required without affecting communication between the various services.

Each service gets an IP address and a name inside the cluster, and that name is what other PODs should use to access the service. This type of service is known as a ClusterIP service.
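To illustrate using the name, here is a sketch of a front end POD that is told where the back end lives through an environment variable. The variable name `BACKEND_URL` and the image `my-frontend-image` are hypothetical, and the sketch assumes a service named `back-end` (as in the ClusterIP YAML of this section) in the same namespace. From other namespaces, the full DNS name would be `back-end.<namespace>.svc.cluster.local`, assuming the default cluster domain.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: front-end-pod             # hypothetical POD name
  labels:
    app: myapp
    type: front-end
spec:
  containers:
    - name: web
      image: my-frontend-image    # hypothetical image
      env:
        - name: BACKEND_URL       # hypothetical variable read by the application
          value: http://back-end  # service name, resolved by cluster DNS to the service IP
```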

The YAML for a ClusterIP service looks like this:

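```yaml
apiVersion: v1
kind: Service
metadata:
  name: back-end
spec:
  type: ClusterIP           # the default type; reachable only inside the cluster
  selector:                 # group all PODs carrying these labels
    app: myapp
    type: back-end
  ports:
    - name: http
      port: 80              # port the service exposes on its cluster IP
      targetPort: 80        # port the back end PODs listen on
```

3. LoadBalancer Service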

A LoadBalancer service is the standard way to expose a service to the internet. On supported cloud providers, this service type provisions a load balancer for your application. The external load balancer routes to your NodePort and ClusterIP services, which are created automatically.

If you want to directly expose a service, this is the default method. All traffic on the port you specify is forwarded to the service. There is no filtering, no routing, etc. This means you can send almost any kind of traffic to it, like HTTP, TCP, UDP, WebSockets, gRPC, or whatever.
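In YAML, a LoadBalancer service looks almost the same as the NodePort one; mainly the type changes. A minimal sketch, reusing the front end labels from the NodePort example and assuming the cluster runs on a cloud provider that supports LoadBalancer services:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: myapp-service
spec:
  type: LoadBalancer        # ask the cloud provider for an external load balancer
  selector:
    app: myapp
    type: front-end
  ports:
    - name: http
      port: 80              # port the load balancer exposes
      targetPort: 80        # port the web application listens on inside the POD
```

Once the cloud provider has provisioned the load balancer, `kubectl get service myapp-service` shows its address in the EXTERNAL-IP column. On clusters without such support, that column typically stays `<pending>`.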