Kafka Topics and Partitions

In this tutorial, we are going to discuss Apache Kafka topics and partitions. Understanding topics and partitions helps you learn Kafka faster. This tutorial walks through the concepts, structure, and behavior of Kafka’s topics and partitions.

What is a Kafka Topic?

Similar to how databases have tables to organize and segment datasets, Apache Kafka uses the concept of topics to organize related messages. A Kafka topic is like a table in a database, but without the constraints: you can send whatever you want to a Kafka topic, because Kafka does not verify the content of the messages.

Unlike database tables, Kafka topics cannot be queried. Instead, we create Kafka producers to send data to the topic and Kafka consumers to read the data from the topic in order.
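A minimal in-memory sketch can make the producer/consumer split concrete. It is not Kafka's API: `ToyTopic`, `produce`, and `consume` are hypothetical names chosen for illustration. `produce` appends raw bytes without inspecting them, mirroring the lack of data verification. `consume` returns messages in the order they were written, starting from a given position, because there is no query language.

```python
class ToyTopic:
    """Hypothetical in-memory stand-in for a single Kafka topic (not Kafka's API)."""

    def __init__(self, name: str) -> None:
        self.name = name  # topics are identified by their name
        self._messages: list[bytes] = []

    def produce(self, value: bytes) -> int:
        # No schema or content check: any bytes are accepted.
        self._messages.append(value)
        return len(self._messages) - 1  # position of the new message

    def consume(self, start: int = 0) -> list[bytes]:
        # Consumers read sequentially from a position, in write order.
        return self._messages[start:]


logs = ToyTopic("logs")
logs.produce(b"app started")
logs.produce(b'{"level": "warn", "msg": "disk 80% full"}')  # JSON is accepted
logs.produce(b"\x00\x01\x02")                               # so are raw bytes
print(logs.consume())
```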

A Kafka topic is a particular stream of data within your Kafka cluster. A Kafka cluster can have many topics, named, for example, logs, purchases, Twitter tweets, truck GPS, and so on.

As shown in the above image, a Kafka topic is identified by its name. For example, we might have a topic called logs that contains log messages from our application, and another topic called purchases that contains purchase data from our application as it happens.

Kafka topics can contain any kind of message in any format, and the sequence of all these messages is called a data stream. This is why Kafka is called a data streaming platform: you make data stream through topics.

Data in Kafka topics is deleted after one week by default (this is called the message retention period), and this value is configurable. Deleting old data in this way ensures a Kafka cluster does not run out of disk space as topics keep receiving new messages.
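The one-week default corresponds to 604,800,000 milliseconds. This is the default of Kafka's topic-level `retention.ms` setting, and the broker-level `log.retention.hours` defaults to 168. The toy sketch below, with a hypothetical `apply_retention` helper, shows the time-based cutoff. It makes one simplification: real Kafka deletes whole log segments rather than individual messages, so data can linger somewhat past the period.

```python
import time

# One week in milliseconds: 7 days * 24 h * 60 min * 60 s * 1000 ms.
DEFAULT_RETENTION_MS = 7 * 24 * 60 * 60 * 1000  # 604_800_000


def apply_retention(messages, now_ms, retention_ms=DEFAULT_RETENTION_MS):
    """Keep only (timestamp_ms, value) pairs newer than the retention cutoff.

    Toy model: real Kafka removes whole log segments, not single messages.
    """
    cutoff = now_ms - retention_ms
    return [(ts, value) for ts, value in messages if ts >= cutoff]


now = int(time.time() * 1000)
day = 24 * 60 * 60 * 1000
messages = [
    (now - 10 * day, b"old order"),    # older than one week: dropped
    (now - 2 * day, b"recent order"),  # within one week: kept
]
print(apply_retention(messages, now))
```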

What are Kafka Partitions?

Kafka topics are broken down into a number of partitions. A single topic may have more than one partition; it is common to see topics with 100 partitions.

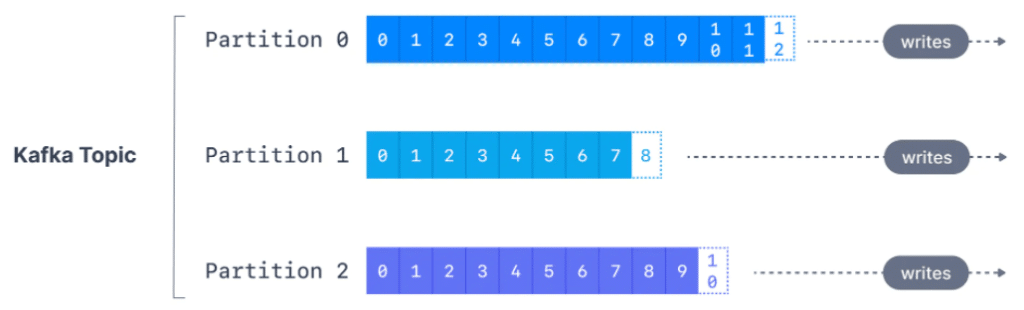

The number of partitions of a topic is specified at the time of topic creation. Partitions are numbered from 0 to N-1, where N is the number of partitions. In the following example, I’m going to have a Kafka topic with three partitions: Partition 0, 1, and 2.

Messages sent to the Kafka topic end up in these partitions, and messages within each partition are ordered. The first message written to partition 0 gets the id 0, the next one gets 1, then 2, and so on, all the way up to 11 in this example. As I keep writing messages into the partition, this id keeps increasing.

The same happens when I write data into partition 1 of the topic: the id starts at 0 and keeps increasing. In other words, every message written to a partition gets an incrementing id, starting from zero. This id is called the Kafka partition offset.
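The following toy model shows how per-partition offsets behave. `ToyPartitionedTopic` and `append` are hypothetical names, not Kafka's API. Partitions are numbered 0 to N-1, and each one hands out its own offsets starting at 0. This sketch never deletes anything, so an offset is simply the message's index in its partition's list.

```python
class ToyPartitionedTopic:
    """Hypothetical in-memory topic split into N partitions (not Kafka's API)."""

    def __init__(self, name: str, num_partitions: int) -> None:
        if num_partitions < 1:
            raise ValueError("a topic needs at least one partition")
        self.name = name
        # Partitions are numbered 0 .. N-1; each is an independent append-only list.
        self.partitions: list[list[bytes]] = [[] for _ in range(num_partitions)]

    def append(self, partition: int, value: bytes) -> int:
        log = self.partitions[partition]
        log.append(value)
        # The offset is the message's position within *this* partition only.
        return len(log) - 1


topic = ToyPartitionedTopic("demo", num_partitions=3)
offsets = [
    topic.append(0, b"a"),
    topic.append(0, b"b"),
    topic.append(1, b"c"),  # partition 1 starts its own count at 0
    topic.append(0, b"d"),
]
print(offsets)  # [0, 1, 0, 2]
```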

As we can see, each partition has its own independent sequence of offsets. Kafka topics are immutable: once data is written to a partition, it cannot be changed. You cannot update a message, and you cannot delete an individual message in place; old data is removed only by Kafka’s own cleanup, such as the retention period described above. You can only keep writing new messages to the partition.
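Immutability can be imitated in Python with a frozen dataclass and a log that only exposes an append operation. In this sketch, the `Record` and `AppendOnlyLog` classes are hypothetical illustrations, not Kafka types. A "correction" therefore has to be written as a new message rather than by editing the old one.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class Record:
    """A hypothetical stored message: once created, its fields cannot change."""
    offset: int
    value: bytes


class AppendOnlyLog:
    """Toy partition that only supports appends; there is no update or delete."""

    def __init__(self) -> None:
        self._records: list[Record] = []

    def append(self, value: bytes) -> Record:
        record = Record(offset=len(self._records), value=value)
        self._records.append(record)
        return record

    def read(self) -> tuple[Record, ...]:
        # Return an immutable snapshot so callers cannot modify the stored list.
        return tuple(self._records)


log = AppendOnlyLog()
first = log.append(b"price=10")
try:
    first.value = b"price=12"  # attempting to rewrite history fails
except FrozenInstanceError:
    log.append(b"price=12")    # the correction becomes a new message instead
print(log.read())
```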

Kafka Topic example

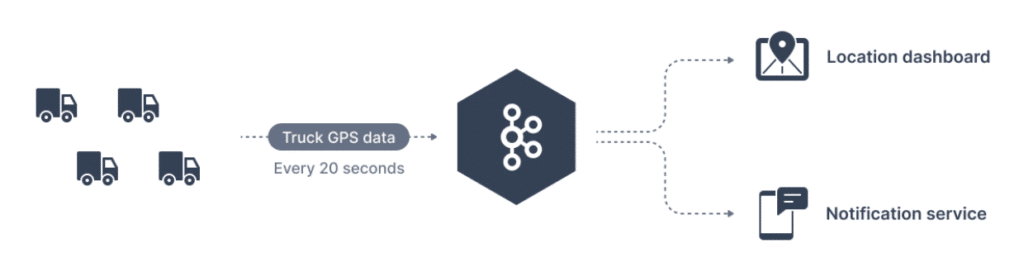

Now let’s take the example of truck GPS. Say you have a fleet of trucks, each equipped with a GPS that reports its position to Apache Kafka. Each truck might send a message to Kafka every 20 seconds, for example, and each message contains information such as the truck ID and the truck position (latitude and longitude).
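Kafka stores message values as raw bytes, so each truck has to encode its reading before sending it. The sketch below uses JSON. The `TruckPosition` class and its field names (`truck_id`, `latitude`, `longitude`) are hypothetical, since the tutorial only says a message contains the truck ID and position, and JSON is one arbitrary choice of format among many.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class TruckPosition:
    # Hypothetical field names; the tutorial only says "truck ID and position".
    truck_id: str
    latitude: float
    longitude: float


def serialize(position: TruckPosition) -> bytes:
    # Kafka stores message values as bytes, so encode the record as UTF-8 JSON.
    return json.dumps(asdict(position)).encode("utf-8")


def deserialize(payload: bytes) -> TruckPosition:
    # A consumer reverses the encoding to rebuild the reading.
    return TruckPosition(**json.loads(payload.decode("utf-8")))


msg = serialize(TruckPosition("truck-42", 48.8566, 2.3522))
print(msg)
```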

The trucks are the data producers: they send data into a Kafka topic named trucks_gps that contains the positions of all trucks. Because a topic is made of partitions, as discussed above, we choose to create this topic with 10 partitions. That is an arbitrary number; later in these tutorials I will discuss how to select the number of partitions for a topic.

Once this topic is created in Kafka, we want consumers that read the trucks_gps data and send it to a location dashboard, so we can track the location of all our trucks in real time. We may also want a notification service to consume the same stream of data. That notification service could, for example, notify customers when their delivery is close.
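The tutorial does not say how the trucks' messages are spread over the 10 partitions. This sketch assumes that each truck uses its ID as the message key and that the key is hashed to pick a partition, which keeps every truck's positions together and in order. The hash is a stand-in: Kafka's default partitioner uses murmur2, whereas `zlib.crc32` is used here only because it is stable and in the standard library. The `partition_for` helper and the sample readings are hypothetical.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 10  # the arbitrary partition count chosen for trucks_gps


def partition_for(key: str, num_partitions: int = NUM_PARTITIONS) -> int:
    # Stand-in hash (crc32, not Kafka's murmur2): same key -> same partition.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Hypothetical stream of (truck_id, (latitude, longitude)) readings, in send order.
readings = [
    ("truck-1", (48.85, 2.35)),
    ("truck-2", (51.50, -0.12)),
    ("truck-1", (48.86, 2.36)),
    ("truck-3", (40.71, -74.00)),
    ("truck-1", (48.87, 2.37)),
]

trucks_gps = defaultdict(list)  # partition number -> messages in that partition
for truck_id, position in readings:
    trucks_gps[partition_for(truck_id)].append((truck_id, position))

for p in sorted(trucks_gps):
    print(p, trucks_gps[p])
```

Because all of truck-1's readings share a key, they land in one partition in the order they were sent, so a consumer sees that truck's route in order.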

This is why Kafka is so useful: multiple services, such as the location dashboard and the notification service, can read from the same stream of data.
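Several services can share one stream because reading a message does not remove it. Each consumer only remembers how far it has read. The toy `ToyConsumer` class below is hypothetical, not a Kafka client: it keeps its own `next_offset`, so the dashboard and the notification service progress independently over the same list of messages.

```python
class ToyConsumer:
    """Hypothetical consumer that remembers its own read position (offset)."""

    def __init__(self, name: str, log: list) -> None:
        self.name = name
        self.log = log          # shared stream; reading never removes messages
        self.next_offset = 0    # each consumer tracks its position independently

    def poll(self) -> list:
        new_messages = self.log[self.next_offset:]
        self.next_offset += len(new_messages)
        return new_messages


trucks_gps = ["truck-1 @ 48.85,2.35", "truck-2 @ 51.50,-0.12"]
dashboard = ToyConsumer("location-dashboard", trucks_gps)
notifier = ToyConsumer("notification-service", trucks_gps)

print(dashboard.poll())   # the dashboard reads both messages
trucks_gps.append("truck-1 @ 48.86,2.36")
print(dashboard.poll())   # then only the new one
print(notifier.poll())    # the notifier, starting later, still sees all three
```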

What are Kafka Offsets?

The offset is an integer value that Kafka adds to each message as it is written into a partition. Each message in a given partition has a unique offset.

Apache Kafka offsets represent the position of a message within a Kafka Partition. Offset numbering for every partition starts at 0 and is incremented for each message sent to a specific Kafka partition. This means that Kafka offsets only have a meaning for a specific partition, e.g., offset 3 in partition 0 doesn’t represent the same data as offset 3 in partition 1.

If a topic has more than one partition, Kafka guarantees the order of messages within a partition, but there is no ordering guarantee across partitions.
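A small simulation shows what "no ordering across partitions" means in practice. A consumer may fetch from the two partitions in different interleavings. Each partition's internal order always survives, but the original send order across partitions cannot be recovered from offsets alone. The message names and the `drain` helper are hypothetical.

```python
# Hypothetical messages; the number is the global order in which they were sent.
partition_0 = ["m1", "m3", "m5"]  # sent 1st, 3rd, 5th
partition_1 = ["m2", "m4"]        # sent 2nd, 4th


def drain(order):
    """Read both partitions, taking the next message from the partition named in `order`."""
    iters = {0: iter(partition_0), 1: iter(partition_1)}
    return [next(iters[p]) for p in order]


# Two equally valid ways a consumer might interleave the partitions.
a = drain([0, 0, 0, 1, 1])
b = drain([1, 1, 0, 0, 0])
print(a)  # ['m1', 'm3', 'm5', 'm2', 'm4']
print(b)  # ['m2', 'm4', 'm1', 'm3', 'm5']
```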

Even though messages in Kafka topics are deleted over time (as seen above), offsets are not re-used. They keep increasing in a never-ending sequence.
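To see why offsets are never handed out twice, the toy partition below tracks the offset of its oldest stored message separately from its contents. This loosely mirrors what Kafka calls the log start offset. When old messages are removed, that start offset moves forward and new messages continue numbering from where they left off. `RetainedPartition` and its methods are hypothetical names.

```python
class RetainedPartition:
    """Toy partition whose offsets keep growing even after old data is deleted."""

    def __init__(self) -> None:
        self.log_start_offset = 0       # offset of the oldest message still stored
        self._messages: list[str] = []

    @property
    def next_offset(self) -> int:
        return self.log_start_offset + len(self._messages)

    def append(self, value: str) -> int:
        offset = self.next_offset
        self._messages.append(value)
        return offset

    def delete_oldest(self, count: int) -> None:
        # Simulates retention removing old data: the start offset moves forward,
        # but no offset number is ever handed out again.
        count = min(count, len(self._messages))
        del self._messages[:count]
        self.log_start_offset += count

    def read(self, offset: int) -> str:
        if offset < self.log_start_offset or offset >= self.next_offset:
            raise KeyError(f"offset {offset} is not available")
        return self._messages[offset - self.log_start_offset]


p = RetainedPartition()
for v in ["a", "b", "c"]:
    p.append(v)       # offsets 0, 1, 2
p.delete_oldest(2)    # "a" and "b" expire
print(p.append("d"))  # 3, not 0
```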

Important notes

- Kafka topics are immutable: once data is written to a partition, it cannot be changed

- Data in Kafka topics is kept only for a limited time (the default is one week), but this is configurable.

- Offsets only have a meaning for a specific partition. For example, offset 3 in partition 0 doesn’t represent the same data as offset 3 in partition 1.

- Offsets are not re-used, even if previous messages have been deleted. They keep increasing one by one as you send messages to your Kafka topic.

- The order of messages is guaranteed only within a partition but not across partitions.

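- Without a key, the producer spreads messages across the topic’s partitions; when a key is provided, all messages with the same key go to the same partition (as long as the number of partitions does not change).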
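The last note, on keyed versus keyless messages, can be sketched with a toy partitioner. It is a simplified illustration, not Kafka's implementation. Keyless messages are assigned round-robin, which is close to what older Java clients did; since Kafka 2.4 the default is a "sticky" strategy that fills a batch for one partition before moving on. Keyed messages are hashed with `zlib.crc32` as a stand-in for Kafka's murmur2. `ToyPartitioner` and `choose` are hypothetical names.

```python
import itertools
import zlib
from typing import Optional


class ToyPartitioner:
    """Hypothetical partitioner: hash the key if present, else round-robin.

    Real Kafka clients hash keys with murmur2 and, since Kafka 2.4, use a
    "sticky" strategy for keyless messages; both are simplified here.
    """

    def __init__(self, num_partitions: int) -> None:
        self.num_partitions = num_partitions
        self._next = itertools.cycle(range(num_partitions))

    def choose(self, key: Optional[bytes]) -> int:
        if key is None:
            return next(self._next)  # spread keyless messages evenly
        return zlib.crc32(key) % self.num_partitions  # same key -> same partition


partitioner = ToyPartitioner(num_partitions=3)
print([partitioner.choose(None) for _ in range(5)])        # [0, 1, 2, 0, 1]
print({partitioner.choose(b"truck-7") for _ in range(5)})  # a single partition
```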