InputSplit

In Hadoop MapReduce, an InputSplit is a logical representation of a portion of the input data. It describes the unit of work assigned to a single map task: each InputSplit is processed by exactly one Mapper. A split is divided into records, and the mapper processes each record, which is a key-value pair, in turn.

The length of an InputSplit is measured in bytes, and every InputSplit has a set of storage locations (hostname strings). The MapReduce system uses these storage locations to place each map task as close to its split’s data as possible. Splits are ordered by size so that the largest one is processed first, a greedy approximation intended to minimize the job’s run time. Note that an InputSplit does not contain the input data; it is only a reference to the data.
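The two ideas above (a split is only a reference, and larger splits go first) can be illustrated with a small sketch. It uses plain Java rather than the Hadoop API; `SplitRef` and `largestFirst` are hypothetical names invented for this example, and the paths and hostnames are made up.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

public class SplitOrderSketch {

    /** Hypothetical stand-in for an InputSplit: it points at data but holds none of it. */
    static final class SplitRef {
        final String path;        // file the split refers to
        final long start;         // byte offset where the split begins
        final long length;        // split length in bytes
        final String[] locations; // hostnames that store the split's data

        SplitRef(String path, long start, long length, String... locations) {
            this.path = path;
            this.start = start;
            this.length = length;
            this.locations = locations;
        }
    }

    /** Returns a copy of the splits ordered by length, largest first. */
    static List<SplitRef> largestFirst(List<SplitRef> splits) {
        List<SplitRef> sorted = new ArrayList<>(splits);
        sorted.sort(Comparator.comparingLong((SplitRef s) -> s.length).reversed());
        return sorted;
    }

    public static void main(String[] args) {
        List<SplitRef> splits = Arrays.asList(
                new SplitRef("/data/in.txt", 0L, 64L, "host-a"),
                new SplitRef("/data/in.txt", 64L, 128L, "host-b", "host-c"),
                new SplitRef("/data/in.txt", 192L, 32L, "host-a"));

        for (SplitRef s : largestFirst(splits)) {
            System.out.println(s.path + " start=" + s.start + " length=" + s.length
                    + " hosts=" + Arrays.toString(s.locations));
        }
    }
}
```

Sorting a copy leaves the caller's list untouched, which keeps the sketch free of side effects.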

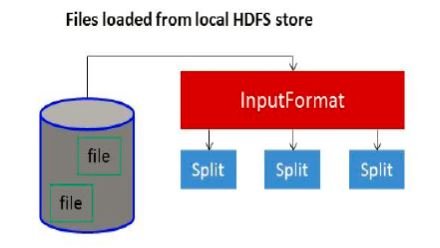

As users, we rarely need to deal with InputSplits directly, because they are created by an InputFormat. The InputFormat creates the InputSplits and, through its RecordReader, divides them into records.

By default, FileInputFormat breaks a file into 128 MB chunks, the same as the default block size in HDFS. We can change this value by setting the mapred.min.split.size parameter (named mapreduce.input.fileinputformat.split.minsize in Hadoop 2 and later) in mapred-site.xml, or by overriding the parameter on the Job object used to submit a particular MapReduce job. We can also control how a file is broken up into splits by writing a custom InputFormat.
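To see how a minimum split size interacts with the block size, here is a minimal sketch of the rule FileInputFormat is commonly described as applying: take the block size, cap it at the maximum split size, then raise it to at least the minimum. This is plain Java, not the Hadoop class itself; `SplitSizeSketch` and the sample values are assumptions made for illustration.

```java
public class SplitSizeSketch {

    /** Split size = max(minSize, min(maxSize, blockSize)), all in bytes. */
    static long computeSplitSize(long blockSize, long minSize, long maxSize) {
        return Math.max(minSize, Math.min(maxSize, blockSize));
    }

    public static void main(String[] args) {
        final long mb = 1024L * 1024L;
        final long blockSize = 128L * mb;

        // With no effective limits, the split size equals the block size.
        System.out.println(computeSplitSize(blockSize, 1L, Long.MAX_VALUE) / mb + " MB");

        // A minimum larger than the block size makes splits bigger than a block.
        System.out.println(computeSplitSize(blockSize, 256L * mb, Long.MAX_VALUE) / mb + " MB");

        // A maximum smaller than the block size makes splits smaller than a block.
        System.out.println(computeSplitSize(blockSize, 1L, 64L * mb) / mb + " MB");
    }
}
```

Under this rule, raising the minimum above the block size is the way to get fewer, larger splits, while lowering the maximum below the block size yields more, smaller ones.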

Change split size in Hadoop

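The split size in Hadoop is user-configurable: we can adjust it to suit the size of the data that a MapReduce program processes. Because each InputSplit is handled by one map task, the number of map tasks equals the number of InputSplits, so changing the split size also changes the number of map tasks.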
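The relationship between split size and the number of map tasks can be estimated with a short calculation. The sketch below is plain Java and only approximates what happens for a single splittable, non-empty file; `countSplits` is a hypothetical helper, and the 10% slack on the last split (`SPLIT_SLOP`) is an assumption modelled on the stock FileInputFormat behaviour.

```java
public class SplitCountSketch {

    // Assumption: the final split may be up to 10% larger than the split size
    // before an extra split is created for the remainder.
    private static final double SPLIT_SLOP = 1.1;

    /** Estimates how many splits (and so map tasks) one non-empty file produces. */
    static int countSplits(long fileLength, long splitSize) {
        if (fileLength <= 0 || splitSize <= 0) {
            throw new IllegalArgumentException("fileLength and splitSize must be positive");
        }
        int count = 0;
        long remaining = fileLength;
        // Carve off full-size splits while clearly more than one split remains.
        while ((double) remaining / splitSize > SPLIT_SLOP) {
            count++;
            remaining -= splitSize;
        }
        // Whatever is left becomes the last split.
        if (remaining != 0) {
            count++;
        }
        return count;
    }

    public static void main(String[] args) {
        final long mb = 1024L * 1024L;
        final long fileLength = 1024L * mb; // a 1 GB file

        System.out.println("128 MB splits: " + countSplits(fileLength, 128L * mb) + " map tasks");
        System.out.println("256 MB splits: " + countSplits(fileLength, 256L * mb) + " map tasks");
        System.out.println(" 64 MB splits: " + countSplits(fileLength, 64L * mb) + " map tasks");
    }
}
```

Doubling the split size halves the number of map tasks for the same file, which is the trade-off to weigh when tuning this setting.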

The client running the job calculates the splits by calling getSplits() and sends them to the application master, which uses their storage locations to schedule the map tasks that will process them on the cluster. Each map task then passes its split to the createRecordReader() method on the InputFormat to obtain a RecordReader for that split. The RecordReader generates records (key-value pairs) and passes them to the map function.
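The flow described above can be imitated in a few lines of plain Java. This is a simplified model, not the Hadoop API: `LineRangeSplit`, `getSplits`, `runMapTask`, and `map` are hypothetical stand-ins, and to keep the sketch short a split here covers a range of whole lines, whereas real splits are byte ranges.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class SplitFlowSketch {

    /** Hypothetical split: a reference to a range of lines, not the lines themselves. */
    static final class LineRangeSplit {
        final int firstLine;
        final int lineCount;

        LineRangeSplit(int firstLine, int lineCount) {
            this.firstLine = firstLine;
            this.lineCount = lineCount;
        }
    }

    /** Client side: plays the role of getSplits() on the InputFormat. */
    static List<LineRangeSplit> getSplits(int totalLines, int linesPerSplit) {
        if (linesPerSplit <= 0) {
            throw new IllegalArgumentException("linesPerSplit must be positive");
        }
        List<LineRangeSplit> splits = new ArrayList<>();
        for (int first = 0; first < totalLines; first += linesPerSplit) {
            splits.add(new LineRangeSplit(first, Math.min(linesPerSplit, totalLines - first)));
        }
        return splits;
    }

    /** Task side: acts as the RecordReader, handing each record to the map function. */
    static List<String> runMapTask(List<String> input, LineRangeSplit split) {
        List<String> output = new ArrayList<>();
        for (int i = 0; i < split.lineCount; i++) {
            int key = split.firstLine + i;  // record key: the line index
            String value = input.get(key);  // record value: the line text
            output.add(map(key, value));
        }
        return output;
    }

    /** Hypothetical map function: emits the key and the length of the line. */
    static String map(int key, String value) {
        return key + "\t" + value.length();
    }

    public static void main(String[] args) {
        List<String> input = Arrays.asList("alpha", "be", "gamma ray", "d", "epsilon");

        // One map task per split: five lines at two lines per split give three tasks.
        for (LineRangeSplit split : getSplits(input.size(), 2)) {
            System.out.println(runMapTask(input, split));
        }
    }
}
```

Note that the splits are computed from sizes alone; the input lines are only touched inside the map task, which mirrors the point that a split is a reference rather than the data.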