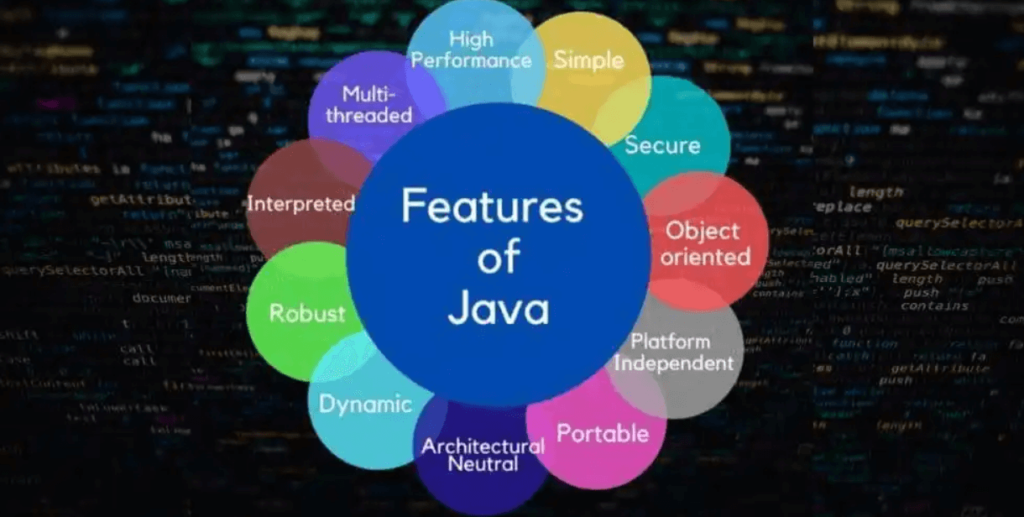

Java Features

In this tutorial, we are going to discuss Java features. Java has become a popular programming language largely because of its excellent features, which play a vital role in its popularity. These features are also called the “Java buzzwords”.

Sun Microsystems officially describes Java with the following list of features:

1. Object Oriented

For a programming language to be called object-oriented, it must satisfy the following four principles:

- Encapsulation

- Abstraction

- Polymorphism

- Inheritance

E.g.: C++, Java

Languages that do not support inheritance and dynamic polymorphism are called “object-based languages”.

E.g.: VB, JavaScript
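To make the four principles concrete, here is a minimal Java sketch that uses all of them together. The class names (`OopDemo`, `Shape`, `Circle`, `Square`) are hypothetical and chosen only for illustration: the private field shows encapsulation, the abstract class shows abstraction, `extends` shows inheritance, and the call `s.area()` inside the loop shows dynamic polymorphism, which is the feature object-based languages lack.

```java
import java.util.Arrays;
import java.util.List;

// Minimal sketch of the four OOP principles; all class names are hypothetical.
public class OopDemo {

    // Abstraction: callers use the general idea of a "shape"
    // without knowing how each concrete shape computes its area.
    abstract static class Shape {
        // Encapsulation: the field is private and exposed only through a getter.
        private final String name;

        Shape(String name) {
            this.name = name;
        }

        String getName() {
            return name;
        }

        abstract double area();
    }

    // Inheritance: Circle and Square reuse the name handling defined in Shape.
    static class Circle extends Shape {
        private final double radius;

        Circle(double radius) {
            super("circle");
            this.radius = radius;
        }

        @Override
        double area() {
            return Math.PI * radius * radius;
        }
    }

    static class Square extends Shape {
        private final double side;

        Square(double side) {
            super("square");
            this.side = side;
        }

        @Override
        double area() {
            return side * side;
        }
    }

    // Dynamic polymorphism: s.area() calls the Circle or Square version
    // depending on the runtime class of each object.
    static double totalArea(List<? extends Shape> shapes) {
        double total = 0;
        for (Shape s : shapes) {
            total += s.area();
        }
        return total;
    }

    public static void main(String[] args) {
        List<Shape> shapes = Arrays.asList(new Circle(1.0), new Square(2.0));
        for (Shape s : shapes) {
            System.out.printf("%s: %.2f%n", s.getName(), s.area());
        }
        System.out.printf("total: %.2f%n", totalArea(shapes));
    }
}
```

Note that `totalArea` never mentions `Circle` or `Square`; adding a new shape only requires a new subclass, not a change to the loop.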

Why is C++ only partially object-oriented?

- According to the pure OOP principle, no method, including main(), should exist outside a class. In C++, the main() function is independent and does not belong to any class.

- C++ provides “friend” functions and classes, which break the OOP principle of “encapsulation” by letting outside code access a class's private members.

- According to the OOP principle, everything needs to be an object. C++ provides built-in data types such as int and float, which are not objects by nature. C# and Java also provide such primitive types, but each one has an object form: in C#, int is an alias for System.Int32, which derives from System.Object, and in Java every primitive type has a wrapper class (Integer, Float, etc.), all of which derive from Object.

- According to the OOP principle, a class should have only one parent in its hierarchy. C++ allows multiple inheritance, which contradicts this principle.

- In Java, every function must be written inside a class; without a class, a program cannot be created. Languages with this rule are sometimes called fully object-oriented, and Java follows this principle.
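The following minimal sketch illustrates two of the points above in Java (the class name `PureOopDemo` and the method `box` are hypothetical): even `main()` has to be declared inside a class, and a primitive `int` is not an object but can be wrapped in an `Integer`, whose class hierarchy leads up to `Object`.

```java
// Minimal sketch: even main() lives inside a class, and a primitive int
// can be wrapped in an Integer object. PureOopDemo is a hypothetical name.
public class PureOopDemo {

    // Autoboxing converts the primitive int into an Integer object.
    static Object box(int value) {
        Integer boxed = value; // the compiler turns this into Integer.valueOf(value)
        return boxed;          // an Integer can be used wherever an Object is expected
    }

    public static void main(String[] args) {
        int primitive = 42;          // primitive value: not an object, has no methods
        Object obj = box(primitive); // now an Integer object

        System.out.println(obj.getClass().getName());               // java.lang.Integer
        System.out.println(Integer.class.getSuperclass().getName()); // java.lang.Number
        System.out.println(Number.class.getSuperclass().getName());  // java.lang.Object
    }
}
```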

2. Java is multithreaded

Multithreading means a program can perform more than one action at the same time.
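As a minimal sketch of multithreading, the code below starts several `java.lang.Thread` objects that run at the same time and then waits for them with `join()`. The class name, the method `countConcurrently`, and the counting task are hypothetical; an `AtomicInteger` is used so that the concurrent increments do not interfere with each other.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Minimal sketch of multithreading; class and method names are hypothetical.
public class ThreadDemo {

    // Starts `threads` threads that each increment a shared counter `perThread`
    // times, waits for all of them to finish, and returns the final count.
    static int countConcurrently(int threads, int perThread) {
        AtomicInteger counter = new AtomicInteger(); // safe to update from many threads
        Thread[] workers = new Thread[threads];

        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < perThread; j++) {
                    counter.incrementAndGet();
                }
            }, "worker-" + i);
            workers[i].start(); // the worker now runs alongside main and the other workers
        }

        for (Thread t : workers) {
            try {
                t.join(); // block until this worker has finished
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // keep the interrupt status
                throw new IllegalStateException("interrupted while waiting for workers", e);
            }
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(countConcurrently(4, 1000)); // 4000
    }
}
```

Replacing the `AtomicInteger` with a plain `int` field and `count++` would make the result unpredictable, because `count++` is not an atomic operation when several threads run it at once.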

3. Java is platform independent

If an application's compiled code can run on different operating systems, the application is platform independent, and the programming language used to develop it is called a platform-independent programming language. Java is a platform-independent programming language because the Java compiler produces bytecode rather than native machine code, and that compiled code can run on any operating system that has a JVM.
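A minimal sketch of what platform independence looks like in practice: compile the class below once with `javac`, then copy the resulting `.class` file unchanged to Windows, Linux, or macOS and run it with `java PlatformDemo`. The class name is hypothetical, and the sketch assumes each machine has a JVM at least as new as the Java version used to compile it. The output differs only because the program asks the JVM which operating system it is running on.

```java
// Minimal sketch: compile once, run the same .class file on any OS with a JVM.
// PlatformDemo is a hypothetical class name.
public class PlatformDemo {

    // Returns the operating system name reported by the running JVM.
    static String osName() {
        return System.getProperty("os.name");
    }

    public static void main(String[] args) {
        // The bytecode is identical everywhere; only these reported values change.
        System.out.println("Operating system: " + osName());
        System.out.println("Java version:     " + System.getProperty("java.version"));
    }
}
```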

Platform: A platform is a hardware or software environment in which a program runs.

Software Platform: The software platform is used to convert the program into an executable format.

E.g.: Java, .NET

Hardware Platform: The hardware platform is mainly used to execute the statements with the help of a processor.

E.g.: an operating system running on its hardware (Windows, Linux, macOS)

The Java programming language comes under the software platform category. It consists of three parts:

- JDK (Java Development Kit)

- JRE (Java Runtime Environment)

- JVM (Java Virtual Machine)

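- The JDK is also called the SDK (Software Development Kit).

- The JDK provides the environment to develop and run Java applications: it includes development tools such as the javac compiler along with a runtime.

- The JRE provides an environment only to run Java applications. For example, if you install a Java application on a client machine, the client is responsible only for running that application, not for developing it, so the JRE is all that is required there.

- The JVM is responsible for executing our Java application. It reads the compiled bytecode and executes it instruction by instruction, so here the JVM acts as the interpreter (modern JVMs also use a just-in-time compiler to turn frequently executed bytecode into native machine code).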

Source Code: The program written by the developer according to the syntax of the programming language.

Compiled Code: The code the compiler generates from the source code. For Java, this is bytecode stored in .class files.

Compiler: Translates the whole source code at once into machine language (or, in Java's case, into bytecode).

Interpreter: Translates and executes code line by line (for the JVM, bytecode instruction by instruction).

Executable Code: Code that the operating system can load and run directly (for example, .exe files on Windows).
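The terms above fit together in the usual Java workflow. The minimal sketch below (the file and class name `HelloWorld` are hypothetical) lists the JDK commands in comments: `javac` compiles source code into bytecode, `javap -c` optionally shows that bytecode, and `java` starts a JVM that executes it.

```java
// Minimal sketch of the compile-and-run flow; HelloWorld is a hypothetical name.
//
//   javac HelloWorld.java    compiler (JDK) turns source code into HelloWorld.class (bytecode)
//   javap -c HelloWorld      optional: disassembles the bytecode so you can inspect it
//   java HelloWorld          launcher starts a JVM, which loads and executes the bytecode
//
// It is the .class file, not a native .exe, that is shipped to other machines.
public class HelloWorld {

    static String greeting(String name) {
        return "Hello, " + name + "!";
    }

    public static void main(String[] args) {
        System.out.println(greeting("Java")); // Hello, Java!
    }
}
```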

4. Java is portable

Portability refers to the ability to run a program on different machines. Java bytecode (.class files) can run in any environment that has a JVM.

5. Java is robust

Robust means strong and reliable. Java uses strong memory management, and several features make it robust: it has no explicit pointers, which avoids a whole class of security problems; it has an automatic garbage collector; and it provides exception handling and a strict type-checking mechanism.
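As a minimal sketch of two of these features, the code below relies on the JVM's array bounds check and on exception handling. The class and method names are hypothetical. Catching `ArrayIndexOutOfBoundsException` is done here only to show that a bad index produces a catchable exception rather than silent memory corruption; in everyday code you would normally check the index before reading.

```java
// Minimal sketch of bounds checking plus exception handling.
// RobustDemo and safeGet are hypothetical names.
public class RobustDemo {

    // Returns values[index], or fallback if the index is out of range.
    // The JVM checks every array access, so an invalid index throws an
    // exception instead of reading memory outside the array.
    static int safeGet(int[] values, int index, int fallback) {
        try {
            return values[index];
        } catch (ArrayIndexOutOfBoundsException e) {
            return fallback;
        }
    }

    public static void main(String[] args) {
        int[] data = {10, 20, 30};
        System.out.println(safeGet(data, 1, -1)); // 20
        System.out.println(safeGet(data, 5, -1)); // -1
    }
}
```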

Java programs occupy the same memory on all operating systems: an int takes 4 bytes on Windows, and it occupies the same 4 bytes on Linux and Solaris. Because memory sizes are the same everywhere, a program that runs correctly on one operating system runs the same way on any other. That's why Java programs are reliable.
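The fixed sizes can be checked directly. In the minimal sketch below (the class name is hypothetical; the `BYTES` constants need Java 8 or later), every line prints the same value on every operating system and processor, because the Java Language Specification fixes the size of each primitive type. In C, by contrast, the size of `int` is left to the compiler and platform.

```java
// Minimal sketch: primitive sizes are fixed by the language, not by the OS or CPU.
// PrimitiveSizes is a hypothetical class name.
public class PrimitiveSizes {

    static int intBytes() {
        return Integer.BYTES; // always 4
    }

    public static void main(String[] args) {
        System.out.println("byte:   " + Byte.BYTES);      // 1
        System.out.println("short:  " + Short.BYTES);     // 2
        System.out.println("char:   " + Character.BYTES); // 2
        System.out.println("int:    " + Integer.BYTES);   // 4
        System.out.println("long:   " + Long.BYTES);      // 8
        System.out.println("float:  " + Float.BYTES);     // 4
        System.out.println("double: " + Double.BYTES);    // 8
    }
}
```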

6. Java is architecture neutral

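A language or technology is architecture neutral if its programs can run on any available processor, regardless of that processor's architecture. Languages like C and C++ are treated as architecture dependent, because their compilers produce native code for one specific processor family. Java programs can run on any processor, irrespective of its architecture and vendor, because the same bytecode is executed by a JVM built for each processor.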
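One architecture difference that Java hides is byte order: x86 processors are little-endian, while some other processors are big-endian. In the minimal sketch below (the class name is hypothetical), `ByteOrder.nativeOrder()` reports the processor's order, but a newly created `ByteBuffer` always uses big-endian order, so `encode(1)` yields the same four bytes on every architecture.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.util.Arrays;

// Minimal sketch: the CPU's byte order varies, but a new ByteBuffer is always
// big-endian, so encode() gives the same bytes on every architecture.
// ByteOrderDemo is a hypothetical class name.
public class ByteOrderDemo {

    // Encodes an int as 4 bytes in ByteBuffer's default (big-endian) order.
    static byte[] encode(int value) {
        return ByteBuffer.allocate(Integer.BYTES).putInt(value).array();
    }

    public static void main(String[] args) {
        System.out.println("Native order: " + ByteOrder.nativeOrder());        // depends on the CPU
        System.out.println("encode(1):    " + Arrays.toString(encode(1)));     // [0, 0, 0, 1]
    }
}
```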

Portability = Platform Independent + Architecture Neutral

7. Java is distributed

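A distributed service is one that runs on multiple servers and can be accessed over a network by many clients around the world. Developing such applications requires a suitable network architecture. Java includes networking support in its standard library (the java.net package and RMI), and technologies such as J2EE (later renamed Java EE, now Jakarta EE) are used to develop large distributed enterprise applications.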
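Java's standard library already includes the basic building blocks for networked programs. The minimal sketch below uses `java.net.ServerSocket` and `java.net.Socket` to send a line from a client to a server and read the echoed reply. It illustrates client/server communication only, not J2EE; both ends run on the loopback address of one machine, and the class and method names are hypothetical.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.PrintWriter;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Minimal client/server sketch using java.net sockets on the loopback address.
// EchoDemo and echoOnce are hypothetical names.
public class EchoDemo {

    static BufferedReader reader(Socket s) throws IOException {
        return new BufferedReader(new InputStreamReader(s.getInputStream(), StandardCharsets.UTF_8));
    }

    static PrintWriter writer(Socket s) throws IOException {
        // autoFlush = true, so println() sends the line immediately
        return new PrintWriter(new OutputStreamWriter(s.getOutputStream(), StandardCharsets.UTF_8), true);
    }

    // Starts a one-shot echo server on a free port, sends `message` from a client,
    // and returns the line the server sends back.
    static String echoOnce(String message) {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket server = new ServerSocket(0, 1, loopback)) { // port 0 = any free port
            // Server side: accept one connection and echo back the first line received.
            Thread serverThread = new Thread(() -> {
                try (Socket conn = server.accept();
                     BufferedReader in = reader(conn);
                     PrintWriter out = writer(conn)) {
                    out.println(in.readLine());
                } catch (IOException e) {
                    // Ignored in this sketch; the client would then read null.
                }
            });
            serverThread.start();

            // Client side: connect to the server's port, send one line, read the reply.
            try (Socket client = new Socket(loopback, server.getLocalPort());
                 PrintWriter out = writer(client);
                 BufferedReader in = reader(client)) {
                out.println(message);
                return in.readLine();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(echoOnce("hello, network")); // hello, network
    }
}
```

In a real distributed setup, the server would run on another host, and the client would connect to that host's address and port instead of the loopback address.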

That’s all about Java features. If you have any queries or feedback, please write to us at contact@waytoeasylearn.com. Enjoy learning, Enjoy Java..!!